How do astronomers measure distances in space? How can we possibly determine the distance to a nearby galaxy?

Astronomers regularly talk with confidence about how far away stars and galaxies are, but how is it possible to calculate such distances?

Astronomers measure the distance between objects in space using a tool called the ‘cosmic distance ladder’, which is a range of different interconnected techniques (see below).

One of the main methods of determining distance in space is to use standard candles: astronomical objects that have a consistent inherent brightness. The dimmer they appear to us compared to this true brightness, the further away they must be.
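The standard-candle idea can be sketched in a few lines of Python. This is only an illustration, assuming the inverse-square law (light spreads evenly over a sphere) and ignoring anything that dims light on the way, such as dust. The function name and the solar figures used for the check are our own choices, not part of any astronomy library.

```python
import math


def standard_candle_distance(luminosity_watts: float, flux_w_per_m2: float) -> float:
    """Distance in metres from the inverse-square law: flux = L / (4 * pi * d**2)."""
    if luminosity_watts <= 0 or flux_w_per_m2 <= 0:
        raise ValueError("luminosity and flux must both be positive")
    return math.sqrt(luminosity_watts / (4 * math.pi * flux_w_per_m2))


# Illustrative check using the Sun: true output of about 3.828e26 W,
# and about 1,361 W per square metre arriving at Earth (assumed round values).
sun_distance_m = standard_candle_distance(3.828e26, 1361.0)
print(f"Distance to the Sun: {sun_distance_m:.3e} m")
```

The result lands close to one Astronomical Unit. The same logic means an object that appears four times fainter than an identical one is twice as far away.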

Among the most common standard candles is a type of exploding star called a Type 1a supernova, but this isn't the only way of measuring distance in the Universe.

Below are some of the most effective ways of working out how far away an object is in space.


Methods of measuring the Universe

Radar ranging

Measures Up to 1 billion km

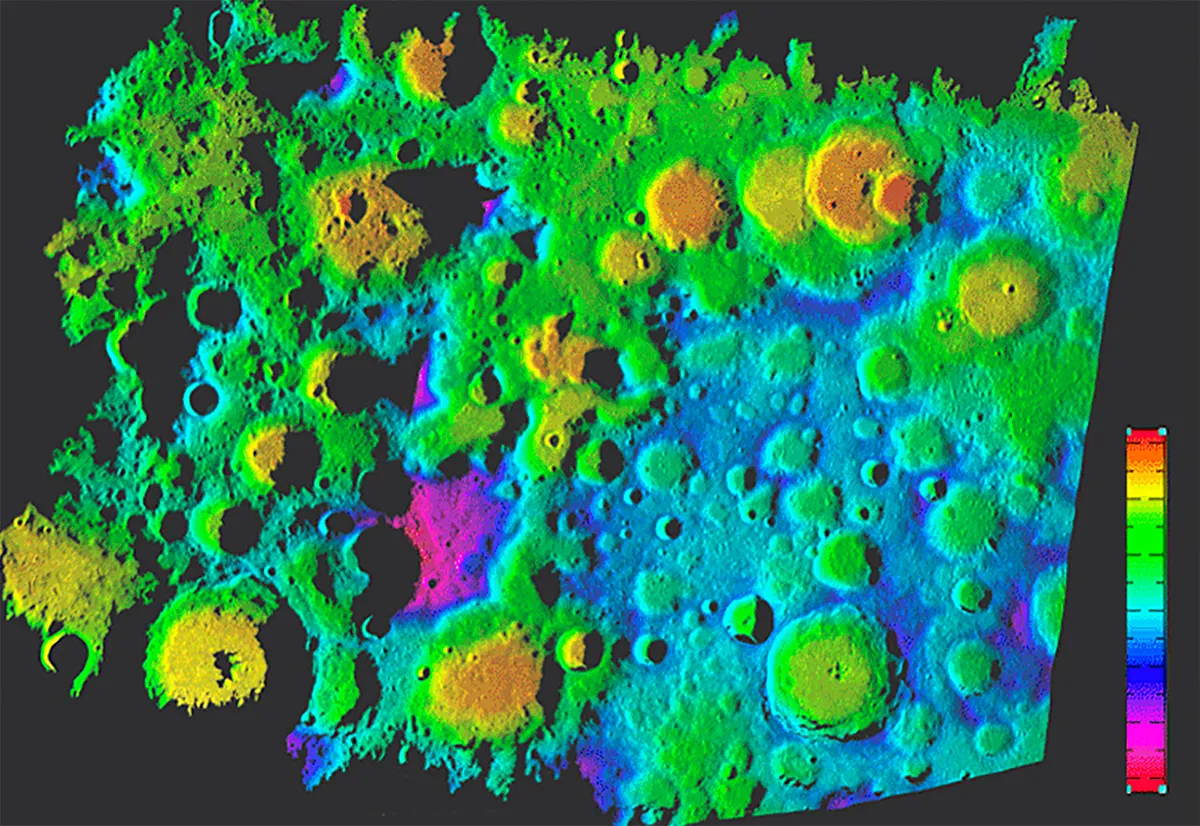

For distance measurements to objects in our Solar System (such as the Moon, above) we often bounce radio waves off their surfaces. The longer the waves take to return to Earth, the further away the object is.
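As a rough sketch of the arithmetic, the distance is the speed of light multiplied by half the round-trip time, since the signal travels out and back. The function name is our own, and the 2.56-second echo is an assumed round figure for the Moon rather than a real measurement.

```python
SPEED_OF_LIGHT_M_S = 299_792_458  # radio waves travel at the speed of light


def radar_distance_km(round_trip_seconds: float) -> float:
    """One-way distance in km for a radio echo with the given round-trip time."""
    if round_trip_seconds < 0:
        raise ValueError("round-trip time cannot be negative")
    one_way_seconds = round_trip_seconds / 2  # the signal goes there and back
    return SPEED_OF_LIGHT_M_S * one_way_seconds / 1000


# Assumed example: an echo from the Moon returning after about 2.56 seconds.
moon_km = radar_distance_km(2.56)
print(f"Distance to the Moon: {moon_km:,.0f} km")
```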

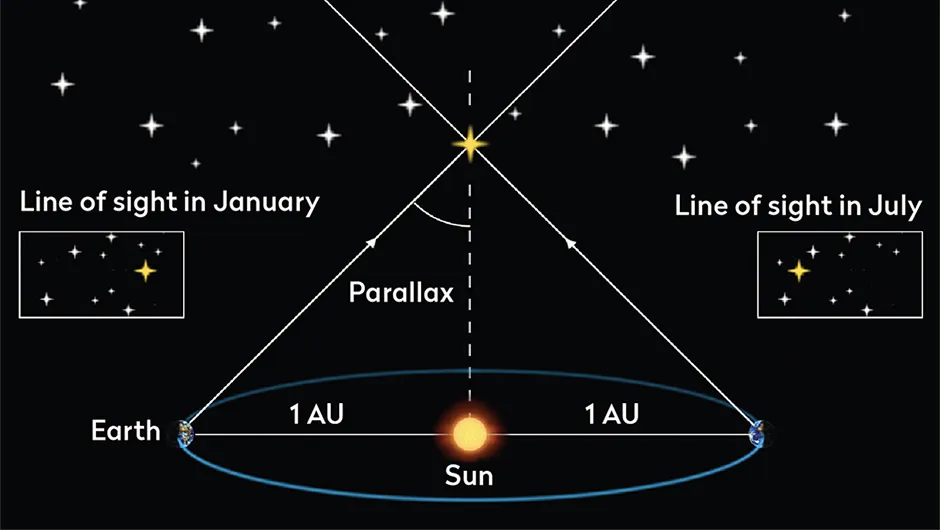

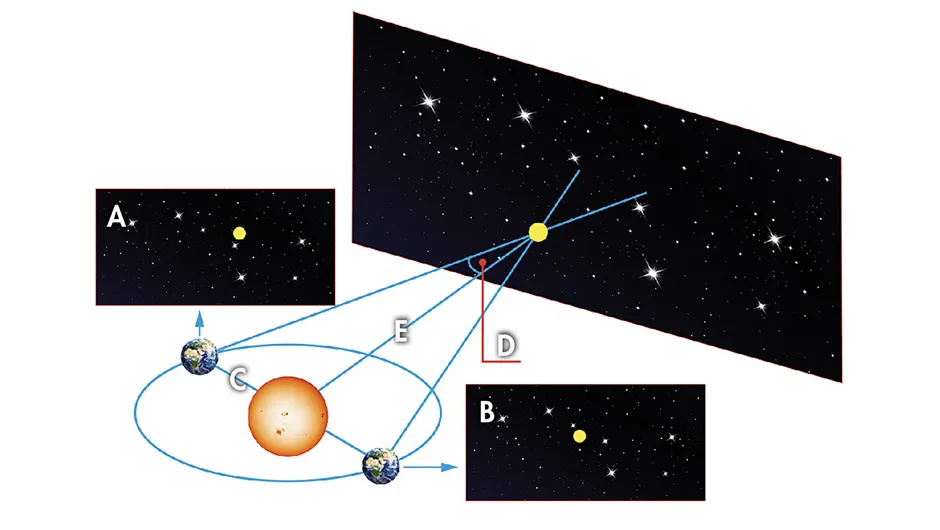

Parallax

Measures Up to 10,000 lightyears

Viewed six months apart, a foreground star appears to change position compared to one in the background. The closer the foreground one is to us the more it will jump, but beyond 10,000 lightyears away the change is too small to measure.
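The sums behind parallax are pleasingly simple. By convention the parallax angle is half the total shift seen over six months, and the distance in parsecs is one divided by that angle in arcseconds. The sketch below assumes a parallax of roughly 0.77 arcseconds for Proxima Centauri; the function name is hypothetical.

```python
LIGHTYEARS_PER_PARSEC = 3.26156


def parallax_distance_parsecs(parallax_arcsec: float) -> float:
    """Distance in parsecs from a parallax angle in arcseconds (d = 1 / p)."""
    if parallax_arcsec <= 0:
        raise ValueError("parallax must be a positive angle")
    return 1.0 / parallax_arcsec


# Assumed value: Proxima Centauri's parallax is roughly 0.77 arcseconds.
proxima_pc = parallax_distance_parsecs(0.77)
proxima_ly = proxima_pc * LIGHTYEARS_PER_PARSEC
print(f"Proxima Centauri: {proxima_pc:.2f} parsecs, {proxima_ly:.2f} lightyears")
```

Because the distance is the reciprocal of the angle, halving the parallax doubles the distance, which is why very distant stars produce shifts too small to measure.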

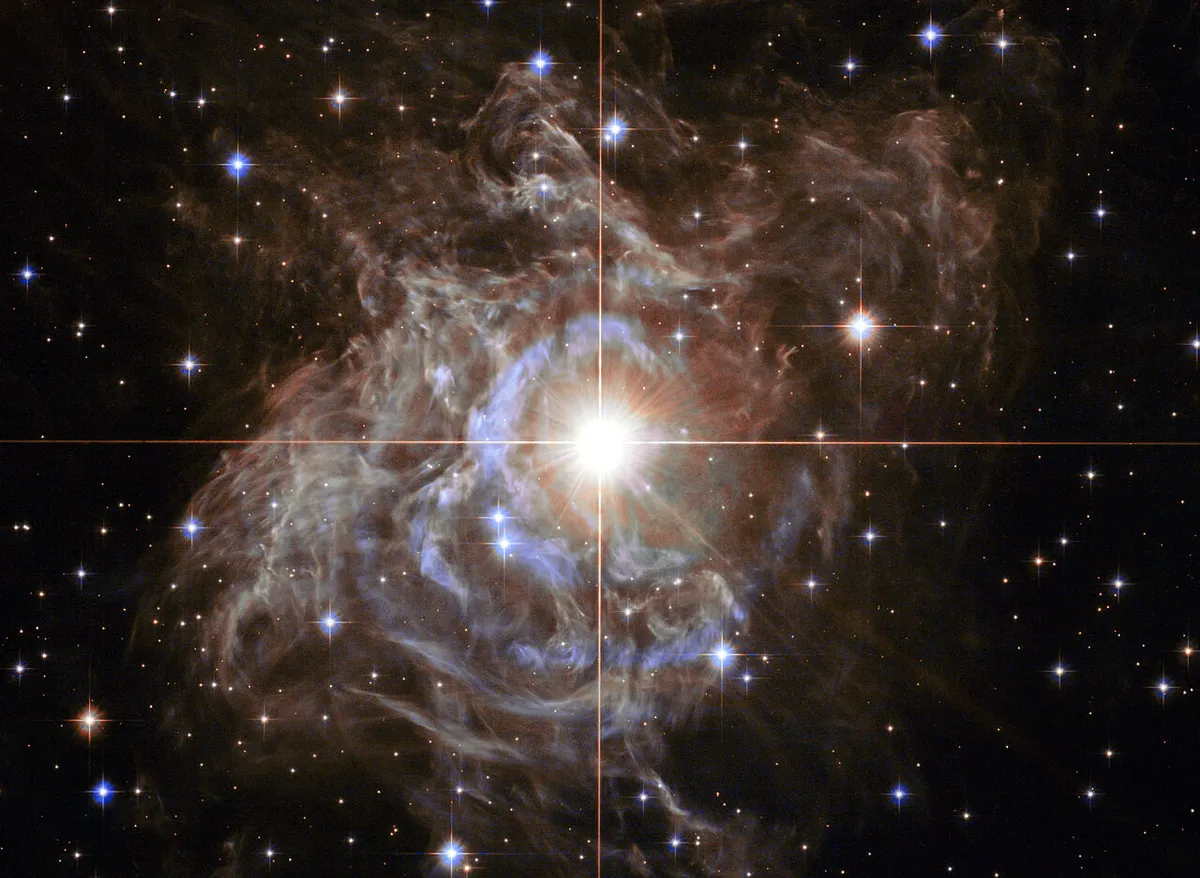

Cepheid variables

Measures Up to 100 million lightyears

These stars are a form of 'standard candle'. They expand and contract in a regular way, altering their brightness. This cycle is longer for brighter Cepheid variables, giving us a way to know their true brightness, and to measure distances to nearby galaxies.

Tully-Fisher relation (TFR)

Measures Up to 15 million lightyears

Brighter, more massive galaxies spin faster. We measure the rotation of a distant galaxy by analysing its light spectrum, and its rotation speed tells us its true brightness. As with standard candles, the dimmer a galaxy appears compared to this true brightness, the further away it must be.

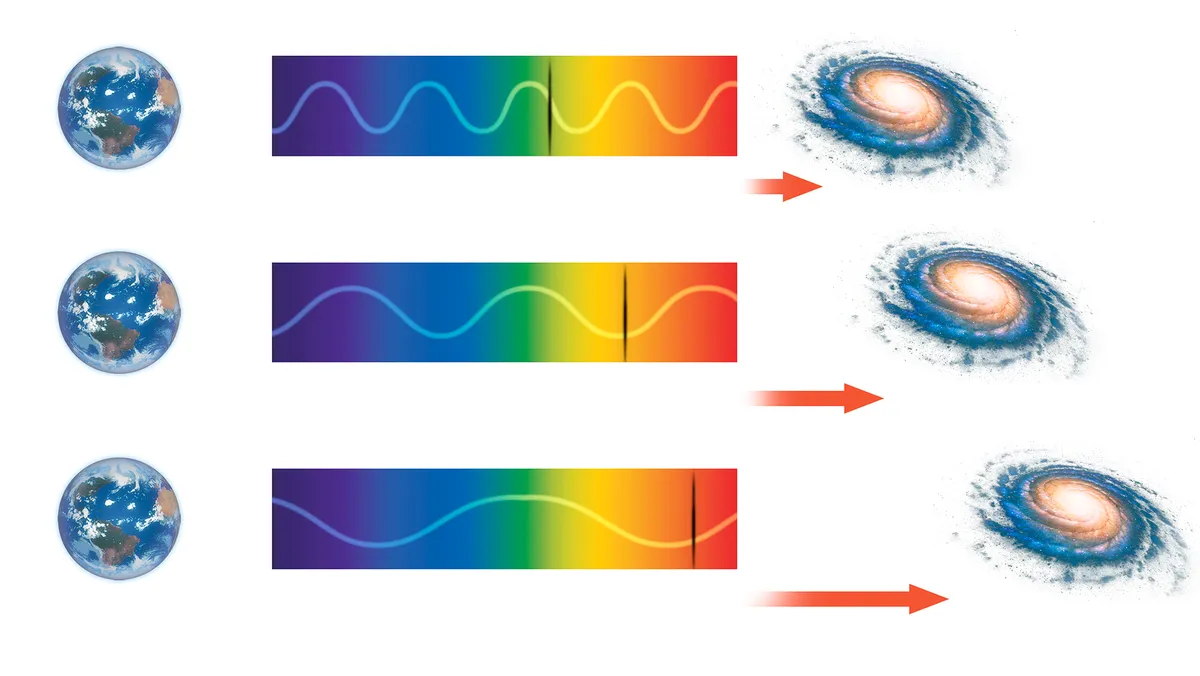

Redshift

Measures Up to 1 bn lightyears

The light from galaxies stretches out as the Universe expands, shifting it towards the colour spectrum’s red end. Edwin Hubble discovered that redshift increases with distance. To work out how far away the furthest galaxies are we just analyse their light.
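For relatively nearby galaxies, Hubble's discovery can be turned into a back-of-the-envelope calculation: the recession speed is roughly the redshift multiplied by the speed of light, and the distance is that speed divided by the Hubble constant. The sketch below assumes a Hubble constant of 70km/s per megaparsec, a round figure chosen for illustration. The simple linear relation only holds for small redshifts; the most distant galaxies need a full cosmological model.

```python
SPEED_OF_LIGHT_KM_S = 299_792.458
HUBBLE_CONSTANT_KM_S_PER_MPC = 70.0  # assumed round value, for illustration only
LIGHTYEARS_PER_PARSEC = 3.26156


def hubble_distance_mpc(redshift: float) -> float:
    """Approximate distance in megaparsecs, valid only for small redshifts."""
    if redshift < 0:
        raise ValueError("this sketch only handles galaxies moving away from us")
    recession_speed_km_s = redshift * SPEED_OF_LIGHT_KM_S
    return recession_speed_km_s / HUBBLE_CONSTANT_KM_S_PER_MPC


# Example: a galaxy whose light is stretched by 1 per cent (redshift 0.01).
distance_mpc = hubble_distance_mpc(0.01)
distance_million_ly = distance_mpc * LIGHTYEARS_PER_PARSEC
print(f"{distance_mpc:.1f} megaparsecs, about {distance_million_ly:.0f} million lightyears")
```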

The Chandrasekhar Limit

Back in the 1930s, 19-year-old physicist Subrahmanyan Chandrasekhar was travelling by boat from his home in India to study in Europe.

During his three-week voyage he passed the time by thinking about objects called white dwarfs, which form when stars like the Sun die.

Chandrasekhar calculated that there is a limit to how heavy a white dwarf can be: 1.4 times the mass of our Sun, a threshold now known as the Chandrasekhar Limit.

"Above this mass a white dwarf cannot be sustained," says Surajit Kalita, from the Indian Institute of Science in Bangalore. "It will burst".

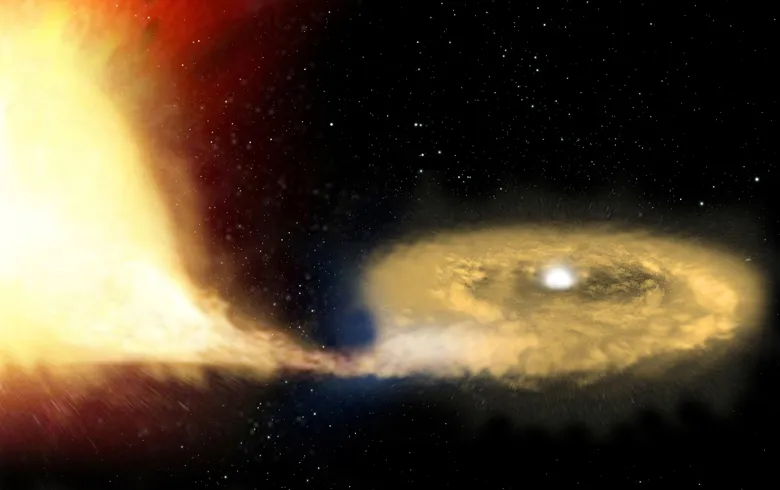

It is this explosion that we see as a Type 1a supernova. The detonations are thought to occur when a white dwarf merges with a neighbouring star or robs material from it by ripping gas away with its strong gravitational pull.

How do Type 1a supernovae work?

Two stars are orbiting one another in a binary star system.

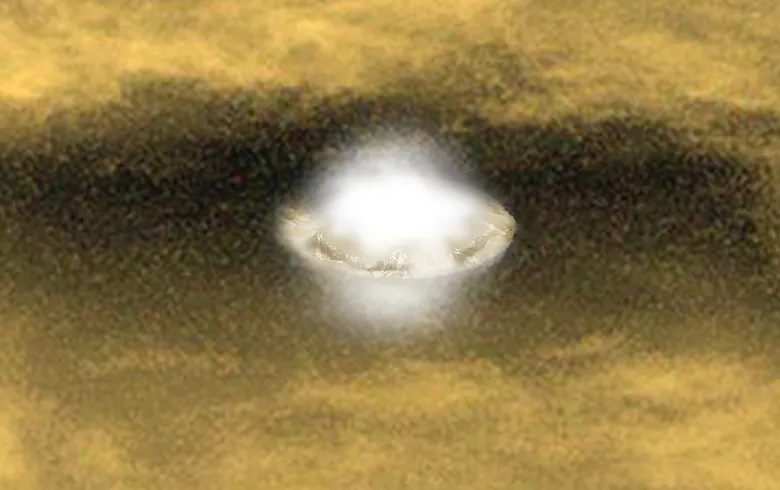

One dies to become a dense white dwarf star.

Its strong gravity allows it to steal material from its neighbour, increasing its own mass in the process.

As the white dwarf’s mass nears the Chandrasekhar Limit, the star shrinks under the new material’s weight.

As the pressure and temperature inside both rise, the white dwarf’s carbon and oxygen fuse into iron.

This turns the white dwarf into a fusion bomb, which soon detonates as a Type 1a supernova.

It’s a cataclysm so bright that it can be seen halfway across the Universe and it will briefly outshine the entire galaxy it resides in.

After the explosion, the supernova will fade over a period of days to weeks.

The radioactive decay of ejected material allows us to tell the difference between a Type 1a supernova and other supernovae that are not standard candles.

Units for measuring distances in space

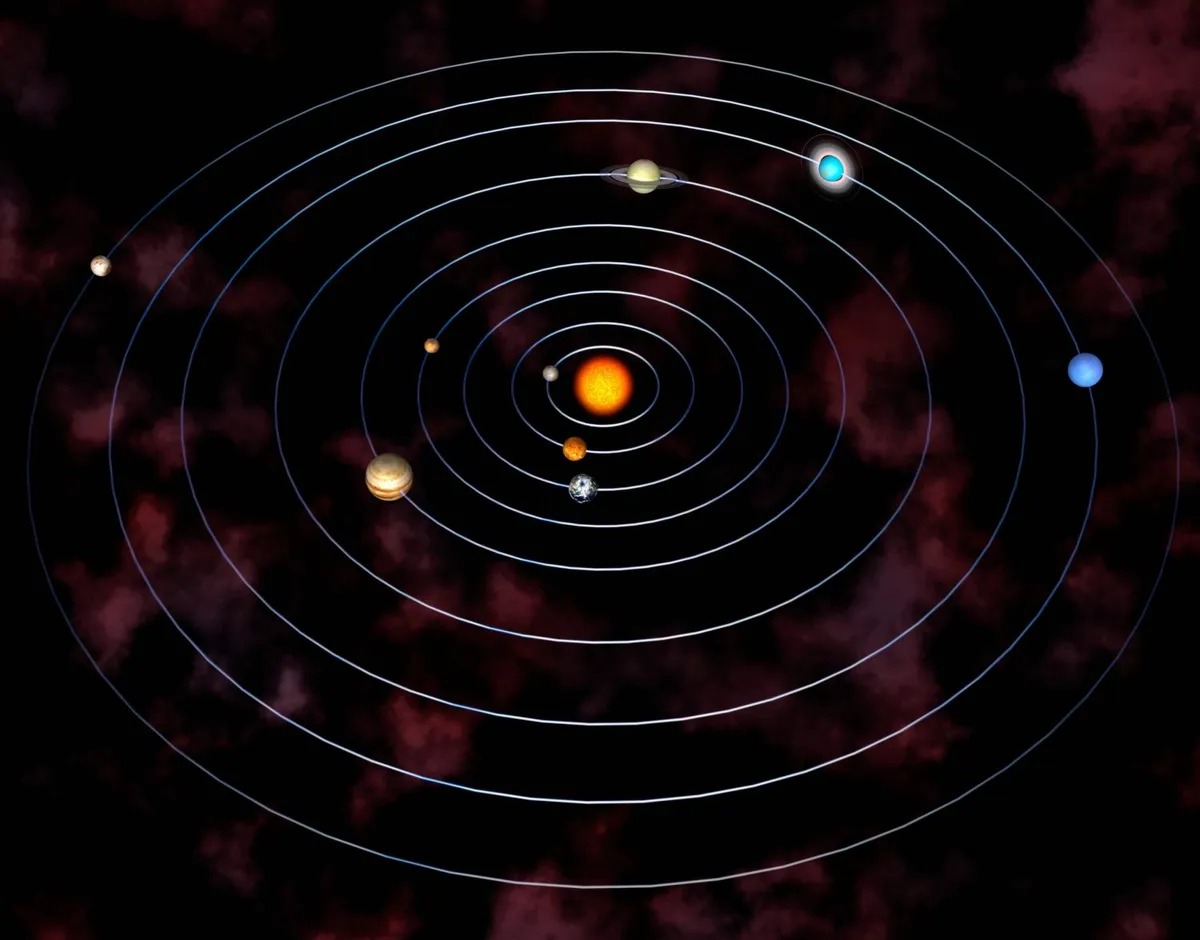

The distances between celestial objects are so mindbogglingly vast that specialist units are needed to chart them.

To express in miles the distance from Earth to the edge of the observable Universe, for example, results in the unwieldy figure of 270,000,000,000,000,000,000,000 (give or take).

Even using mathematical notation to shorten it to 2.7×10²³, it’s still so esoteric as to be near meaningless.

What space needs is really, really big units of measurement, so what are the different units for measuring distance in space?

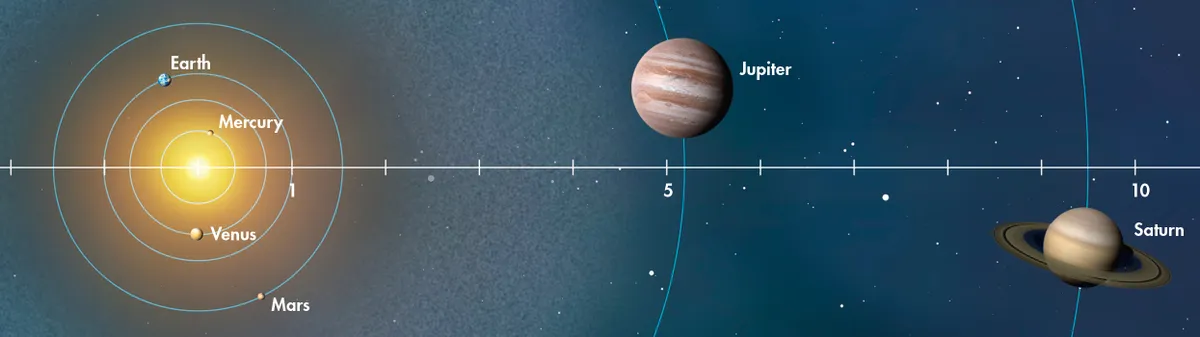

Astronomical Unit

One Astronomical Unit (AU) is equal to the radius of Earth’s orbit around the Sun: or, to be more precise, the average radius, since Earth’s orbit is elliptical.

An AU is defined as exactly 149,597,870,700m (about 93 million miles), a value officially set by the International Astronomical Union (IAU) in 2012.

Astronomers have been trying to calculate the distance from Earth to the Sun ever since, in the 3rd century BC, Archimedes estimated it to be around 10,000 times Earth’s radius, or 63,710,000km – so he was nearly halfway there.

Not bad for someone who lived 2,000 years before the telescope was invented.

It wasn’t until 1659 that Christiaan Huygens made the first close guess of 24,000 Earth radii (152,904,000km). Some science historians dismiss his calculations as more luck than judgement, preferring to cite Jean Richer and Giovanni Domenico Cassini’s rigorously calculated 22,000 Earth radii (140,162,000km) as the first scientifically plausible estimate, despite the fact that it was further from the mark than Huygens’s.

Lightyears

A common unit for expressing distance in space is the distance light travels in one year, known as a lightyear, which is around 9.5 trillion km.

If you want to be precise, the IAU regards a year as 365.25 days, making a lightyear 9,460,730,472,580,800m.
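That precise figure is easy to check for yourself. The short sketch below multiplies the speed of light (299,792,458m per second, a value not quoted above) by the number of seconds in a 365.25-day year, using whole numbers so nothing is lost to rounding. The constant names are our own.

```python
SPEED_OF_LIGHT_M_S = 299_792_458       # metres per second
SECONDS_PER_DAY = 86_400

# A year of 365.25 days: 365 whole days plus a quarter of a day.
SECONDS_PER_YEAR = 365 * SECONDS_PER_DAY + SECONDS_PER_DAY // 4

LIGHTYEAR_M = SPEED_OF_LIGHT_M_S * SECONDS_PER_YEAR
print(f"One lightyear = {LIGHTYEAR_M:,} m")
print(f"That is about {LIGHTYEAR_M / 1e15:.2f} trillion km")
```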

The germ of the concept originated with Friedrich Bessel, who in 1838 made the first successful measurement of the distance to a star outside our Solar System, 61 Cygni.

In his findings he mentioned that light takes 10.3 years to travel from 61 Cygni to Earth.

He wasn’t seriously positing the idea of lightyears as a unit. For one thing the speed of light at the time had yet to be calculated accurately.

However, the concept was too enticing to ignore and by the end of the 19th century it was in general use, even if some astronomers ever since – including Arthur Eddington who called it irrelevant – have been sniffy about its use.

So why is the lightyear useful? Take our nearest extrasolar star, Proxima Centauri.

Instead of expressing its distance in kilometres (38,624,256,000,000) or AU (258,064.516) – values too vast to grasp meaningfully – we can say it’s 4.25 lightyears away.

Our closest large neighbouring galaxy, Andromeda, is over two million lightyears away.

Parsecs

A parsec is roughly 30 trillion km, or a little over three lightyears. Officially, a parsec is the distance at which one astronomical unit subtends an angle of one arcsecond.

This definition would leave most people going “Huh?” But it’s not quite as arcane as it sounds.

The parsec is based on parallax vision.

For a practical example, hold your finger in front of your eyes, then alternate closing each eye; the finger appears to leap from side to side in relation to the background.

Now imagine this on a cosmic scale.

If Earth is on one side of the Sun, when we look at a nearby star, it will appear to be in one position in respect to the stars in the background.

Six months later, when Earth is on the extreme other side of the Sun, that same star will appear to be in a slightly different position against its background.

We’re talking tiny amounts of difference, measured in arcseconds (of which there are 3,600 in one degree of sky).

A parsec is the distance to a star that would appear to move by two arcseconds over a six-month period.

To put it another way, one arcsecond as Earth travels the linear equivalent of 1AU. Hence the name: PARallax, arcSECond. The term first appeared in a 1913 paper by English astronomer Frank Dyson.

This places Proxima Centauri 1.3 parsecs away from us, and the Andromeda Galaxy nearly 800 kiloparsecs.
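The definition of the parsec can be turned into numbers with a little trigonometry. Picture a long, thin right-angled triangle with 1AU as its short side and an angle of one arcsecond at the far tip; the long side is one parsec. The sketch below is only illustrative and the variable names are our own.

```python
import math

ASTRONOMICAL_UNIT_M = 149_597_870_700      # the IAU's defined value
LIGHTYEAR_M = 9_460_730_472_580_800        # light travel in a 365.25-day year

# One arcsecond is 1/3,600 of a degree; convert it to radians for math.tan.
one_arcsecond_rad = math.radians(1 / 3600)

# Distance at which 1AU subtends an angle of one arcsecond.
parsec_m = ASTRONOMICAL_UNIT_M / math.tan(one_arcsecond_rad)

print(f"1 parsec is about {parsec_m / 1e15:.1f} trillion km")
print(f"1 parsec is about {parsec_m / LIGHTYEAR_M:.5f} lightyears")
print(f"1 parsec is about {parsec_m / ASTRONOMICAL_UNIT_M:,.0f} AU")
```

The results match the rough figures quoted above: about 30 trillion km, or a little over three lightyears.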

Kiloparsecs

Hang on – kiloparsecs? Yes, even parsecs aren’t huge enough for some scales, so they’re upscaled to kiloparsecs, megaparsecs and gigaparsecs (one thousand, one million and one billion parsecs respectively).

Which means we can now inform you that the edge of the visible Universe is 14 gigaparsecs away without wearing out the zero key on our keyboard.

Comparing distances in space

| Distance | AUs | Lightyears | Parsecs |
|---|---|---|---|
| The Sun to Earth | 1 | 0.0000158 | 0.00000485 |
| The Sun to Neptune | 30.047 | 0.00047 | 0.00014567 |
| 1 lightyear | 63,241 | 1 | 0.306601 |
| 1 parsec | 206,265 | 3.26156 | 1 |
| Earth to Proxima Centauri | 258,064.516 | 4.25 | 1.3 |
| Earth to the Andromeda Galaxy | 158,102,690,000 | 2,500,000 | 780,000 |
| Earth to the edge of the visible Universe | 2,888,000,000,000,000 | 46,000,000,000 | 14,000,000,000 |

Parsecs, the Millennium Falcon and the Kessel Run

For many decades, those who understood how the Universe is measured would sigh wearily when pulp sci-fi authors mistook lightyears for a measure of time, rather than distance.

However, in a well-known gaffe in the original Star Wars (1977), George Lucas’s script mistakes a parsec for a measure of time, when Han claims the Millennium Falcon “made the Kessel Run in less than 12 parsecs”.

The recent Solo film (rather unconvincingly) tried to retroactively explain away this discrepancy with some nonsense about shortcuts.

Colin Stuart (@skyponderer) is an astronomy author and speaker. Get a free e-book at colinstuart.net/ebook.

Dave Golder is a science journalist and writer.

This article is a combination of two articles that originally appeared in the April 2021 and October 2018 issues of BBC Sky at Night Magazine.